Selected publications.

A few highlights — for the full record see the live BibTeX index further down or Google Scholar.

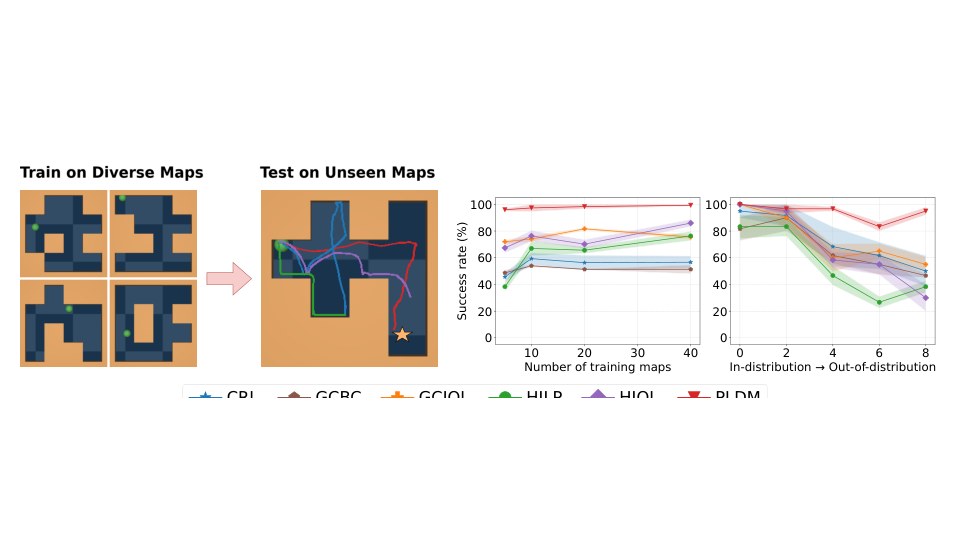

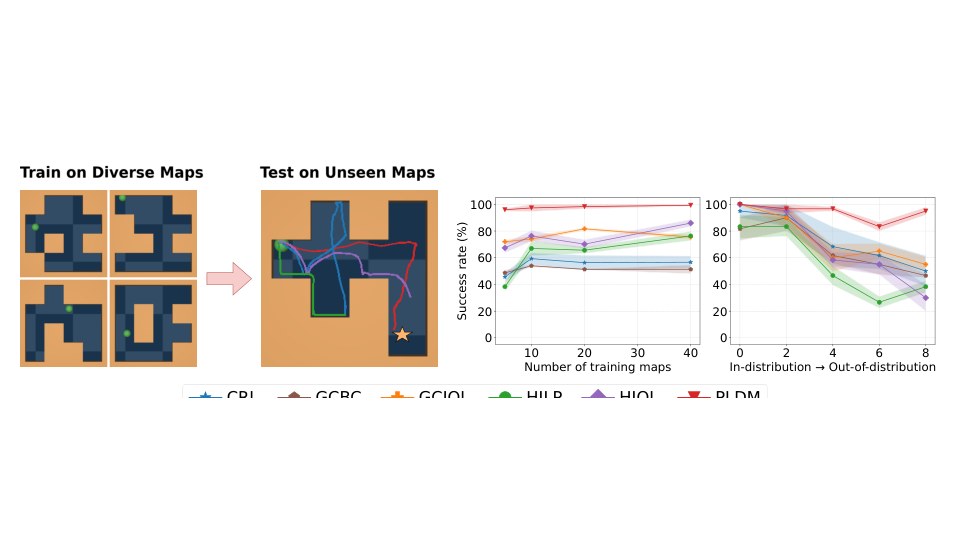

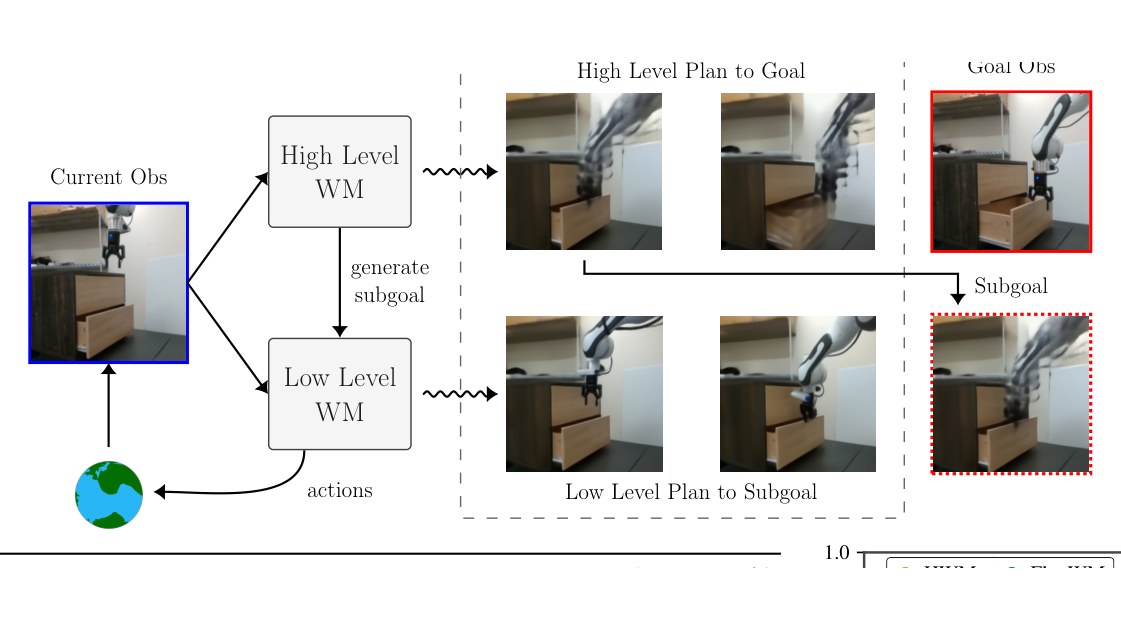

Hierarchical Planning with Latent World Models

Latent world models learned at multiple temporal scales — enabling zero-shot, long-horizon robotic control (70% pick-and-place success vs 0% for single-scale baselines).

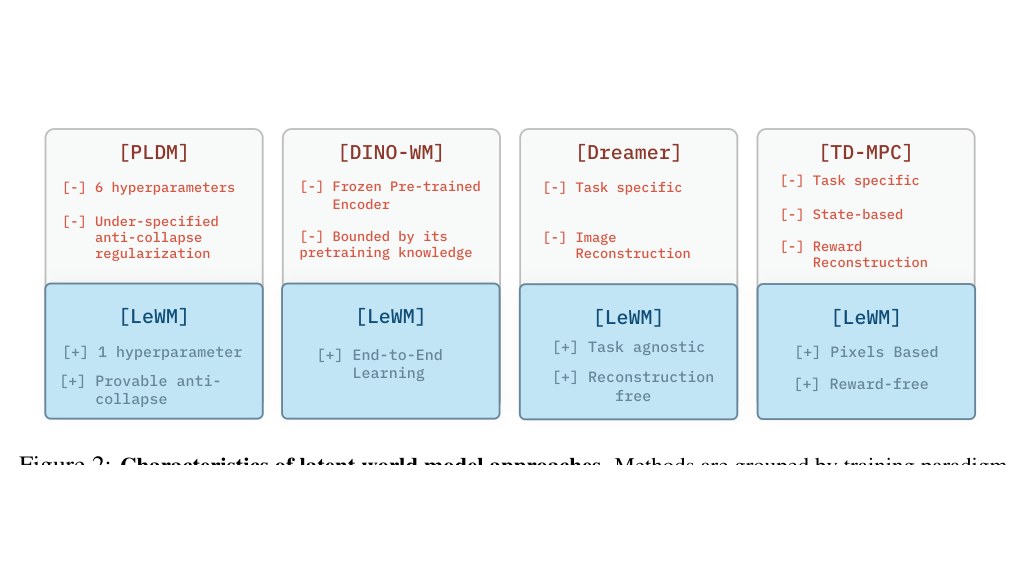

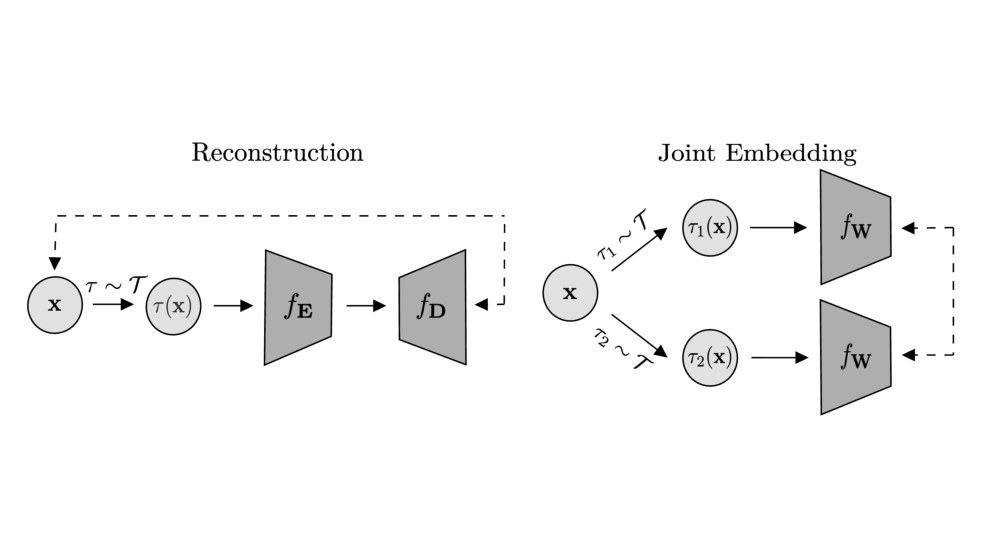

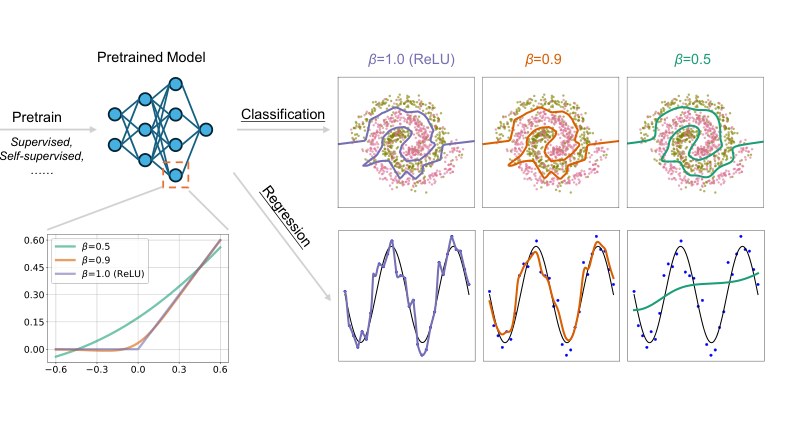

Joint-Embedding vs Reconstruction: Provable Benefits of Latent Space Prediction for SSL

Closed-form analysis of when JEPA wins over reconstruction: latent prediction is strictly preferred when irrelevant features dominate the input signal.

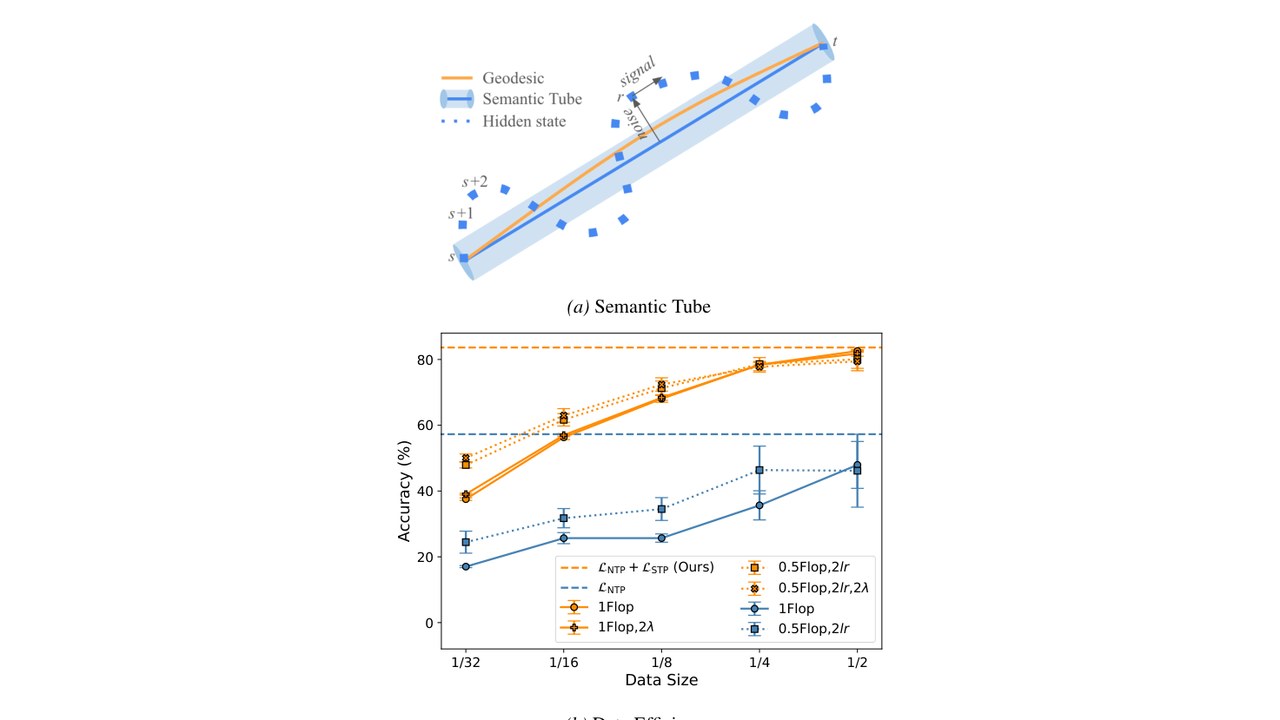

Semantic Tube Prediction: Beating LLM Data Efficiency with JEPA

Extending JEPA to language. Constrains hidden trajectories to a tube around the geodesic — drastically reducing the data needed to fine-tune LLMs. With Huang and LeCun.

Clustering Earthquake Signals and Background Noise in Continuous Seismic Data with Unsupervised Deep Learning

Deep wavelet representations for time series, deployed in NASA's Mars SEIS mission for marsquake detection. Demonstrates learnable signal processing at the scale of real geophysical data.
